Hello Experts,

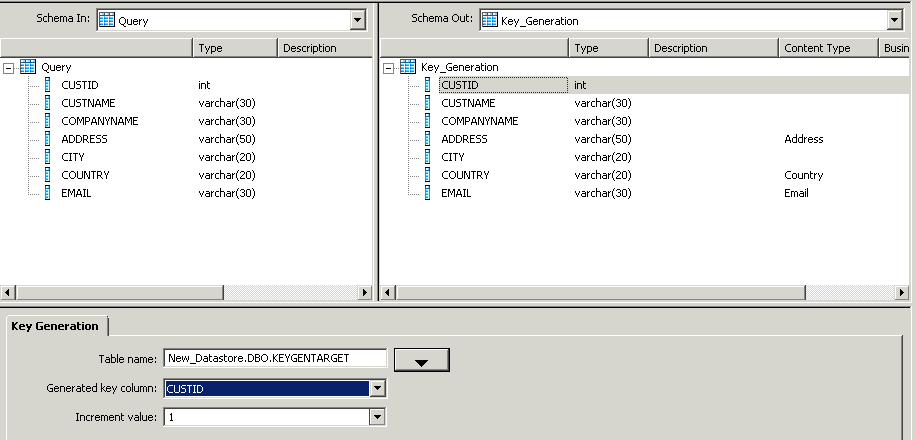

SAP Data Services (BODS) offers two related ways to number rows. The Key Generation transform generates artificial keys for new rows in a table: it looks up the maximum existing value of the surrogate key column in the table and uses it as the starting value when generating keys for the new rows in the input data set. For a simple sequence, BODS also has built-in functions such as gen_row_num that can be mapped inside a Query transform. For information about operators, functions, and transforms that you can use as push-down functions with Data Services, see SAP Note 2212730.
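The key generation logic described above can be sketched in a few lines of Python. This is only an illustration of the idea (read the maximum key once, then count upwards), not how Data Services implements it; the function name `key_generation`, the column name `ID`, and the sample rows are assumptions made for the example.

```python
def key_generation(new_rows, existing_keys, key_column="ID"):
    """Assign surrogate keys to new rows, continuing after the current maximum.

    Hypothetical helper: it mimics the described behaviour only.
    """
    # The maximum existing key is looked up once, before any row is processed.
    next_key = max(existing_keys, default=0)
    keyed_rows = []
    for row in new_rows:
        next_key += 1  # increment by one for every new row
        keyed_rows.append({**row, key_column: next_key})
    return keyed_rows


existing_keys = [1, 2, 3]                      # keys already in the target table
new_rows = [{"NAME": "x"}, {"NAME": "y"}]      # rows arriving in the dataflow
keyed = key_generation(new_rows, existing_keys)
print(keyed)
```

With the sample data, the two new rows continue the sequence after the highest existing key.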

I was using the gen_row_num_by_group function provided by Data Services in my project. After experimenting with it, I recorded my observations and thought I would share them with you all.

Suppose you get a requirement to rank rows. BODS provides a function called gen_row_num_by_group, which assigns a rank to incoming rows.

But be wary of one thing when you use this function. In layman's terms, all your incoming records should be properly arranged; in technical terms, the input must first be ordered by the column on which you apply the ranking.

Let’s understand it with an example.

Consider my source and target below:

The mapping of source to target is as follows:

When the job is executed for the first time, the records of the target table are:

Now, before jumping to gen_row_num_by_group, let’s have a look at the gen_row_num function.

GEN_ROW_NUM: As the name suggests, this function generates a row number: it assigns a number to every incoming row each time you execute the dataflow inside your job. By default the number starts at 1 and increases by 1 for each incoming record, but if you map it like

gen_row_num() + 1 then this ‘1’ is added to the number of every incoming row.

In general, it can be

Gen_row_num()+n

Where n=1,2,3,…
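To make the effect of the offset concrete, here is a small Python sketch of the numbering. It only imitates the behaviour described above; `number_rows` and the sample rows are hypothetical names used for the illustration.

```python
def number_rows(rows, n=0):
    """Imitate gen_row_num() + n: number rows from 1 upwards, then add n."""
    return [(row, row_num + n) for row_num, row in enumerate(rows, start=1)]


rows = ["first", "second", "third"]

plain = number_rows(rows)        # like mapping gen_row_num()
shifted = number_rows(rows, 1)   # like mapping gen_row_num() + 1

print(plain)
print(shifted)
```

The offset does not change the step between rows; it only shifts where the sequence starts.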

Let’s understand with an example,

I’ve added Result column and mapped it with gen_row_num() function as shown below:

So after executing the job my output looks like:

As I mentioned, it added a sequence number to every incoming record.

Now let’s understand the functionality of gen_row_num_by_group.

Gen_Row_Num_By_Group: This makes groups of similar records and then applies the gen_row_num functionality within each group. (Ha ha, this definition is my own. :))

As I said, it forms groups based on a specified column and then assigns row numbers within each group.

I’ve mapped my result column with gen_row_num_by_group as shown:

After executing the job, my target table is as follows:

So, logically, what did it do?

For each group of similar incoming records, it assigned row numbers.

For example, for incoming records 101 to 104, column C2 had the value ‘A’, so it assigned them 1, 2, 3, 4.

Then at record 105 the value of column C2 changed to ‘AB’, so it assigned that record 1. Record 106 had the same value for C2, so it was assigned 2.

It did the same for all the other incoming rows.

So ideally our function has ranked each group of similar records. But wait, check record 109: it has the value ‘1’, but it should have the value ‘5’ as per the functionality of gen_row_num_by_group.

So it means I first need to arrange my records in order.
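The behaviour seen so far fits a counter that restarts whenever the grouping value differs from the previous row. Below is a minimal Python sketch of that idea, not the actual Data Services implementation. The C2 values for records 107 and 108 are not given in the text, so ‘B’ is assumed here purely for illustration.

```python
def gen_row_num_by_group(values):
    """Number rows from 1, restarting whenever the value differs from the previous row."""
    numbers = []
    previous = object()  # sentinel that never equals a real value
    counter = 0
    for value in values:
        counter = counter + 1 if value == previous else 1
        previous = value
        numbers.append(counter)
    return numbers


# (C1, C2) pairs in arrival order; the C2 values for 107 and 108 are assumed.
source = [
    (101, "A"), (102, "A"), (103, "A"), (104, "A"),
    (105, "AB"), (106, "AB"),
    (107, "B"), (108, "B"),
    (109, "A"),
]

unsorted_ranks = dict(zip(
    (c1 for c1, _ in source),
    gen_row_num_by_group([c2 for _, c2 in source]),
))
print(unsorted_ranks)
```

Because record 109 arrives after rows with a different C2 value, the counter has already restarted, so it gets 1 instead of 5.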

So I apply an order by, as shown, on the column on which I want to assign the rank:

Again I execute my job, and the output looks like:

But all in vain: for C1 = 109 I again have rank ‘1’.

So this function

first applied the gen_row_num_by_group functionality to all the incoming source records, and only then applied the order by and arranged the records in order.

See, the records in C2 with the value ‘A’ form a group, and those with ‘AB’ form a group.

Similarly, all similar records in C2 form a group.

By group I mean that similar records sit next to each other in order.

So to resolve this issue and rank my incoming source records properly, I first need to arrange the incoming records in order and only then apply the gen_row_num_by_group functionality.

So I modify my mapping as shown:

First the order by,

then the gen_row_num_by_group functionality.

Finally, after executing the job, my target table looks like:

So you can see that all similar records of C2 are assigned a proper rank.

How did it process the incoming data and assign the ranks?

First it applied the order by to all the incoming records, which arranged similar records together in groups, and then it applied the ranking to them.
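The order-then-rank sequence can be sketched in Python as follows. This is an illustration under assumptions, not the Data Services internals: the C2 values for records 107 and 108 are not stated in the text and are assumed to be ‘B’, and `rank_after_order_by` is a hypothetical helper name.

```python
def rank_after_order_by(rows):
    """Sort rows by C2 first, then number them with a counter that restarts per C2 value."""
    ordered = sorted(rows, key=lambda row: row[1])  # the "order by" step (stable sort)
    ranked = []
    previous = object()  # sentinel that never equals a real value
    counter = 0
    for c1, c2 in ordered:
        counter = counter + 1 if c2 == previous else 1
        previous = c2
        ranked.append((c1, c2, counter))
    return ranked


# (C1, C2) pairs in arrival order; the C2 values for 107 and 108 are assumed.
source = [
    (101, "A"), (102, "A"), (103, "A"), (104, "A"),
    (105, "AB"), (106, "AB"),
    (107, "B"), (108, "B"),
    (109, "A"),
]

ranked = rank_after_order_by(source)
for row in ranked:
    print(row)
```

Once the rows are ordered, record 109 sits directly after 104 and receives rank 5.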

I hope I was able to explain the functionality of gen_row_num_by_group and the way it processes the incoming data.

Please let me know if I’ve missed anything.

Thanks.

When you import a table with an Identity column, it is treated like any other regular INT datatype column. And when you load it, an error is raised by the database: 'An explicit value cannot be inserted into an Identity column if IDENTITY_INSERT is set to OFF'.

But how can we load a table that has an Identity column?

First, simply do not set any column to type Identity. DI has the Key Generation transform to provide values for surrogate keys. It works similarly to the identity column logic: take the highest key value and increment it by one. However, the identity column checks the highest value for each and every row, whereas the Key Generation transform does so only once, at the beginning of the dataflow. So you cannot use Key Generation if:

- two DI dataflows run in parallel loading the same table. Both will use the same key values.

- DI is loading the table and at the same time some other application inserts data.
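The first of these cases can be illustrated with a short Python sketch. It is a simplified model under assumptions (both dataflows read the maximum key before either has written anything); `read_start_key` and `generate_keys` are hypothetical names, not DI functions.

```python
def read_start_key(table_keys):
    """Read the highest key once, as is done at the beginning of a dataflow."""
    return max(table_keys, default=0)


def generate_keys(start_key, row_count):
    """Generate consecutive keys after start_key, one per incoming row."""
    return [start_key + offset for offset in range(1, row_count + 1)]


table_keys = [1, 2, 3]

# Both dataflows start before either one has loaded any rows,
# so both see the same maximum key.
start_a = read_start_key(table_keys)
start_b = read_start_key(table_keys)

keys_a = generate_keys(start_a, 2)
keys_b = generate_keys(start_b, 2)

collisions = sorted(set(keys_a) & set(keys_b))
print(keys_a, keys_b, collisions)
```

Both runs produce the same keys, which is why the two loads would conflict on the surrogate key.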

What is the problem with identity columns actually? DI adds them to the insert statement, even if they have a NULL value, and SQL Server complains. It wouldn't be a problem if SQL Server looked at the column value, figured out there is a NULL value, and hence ignored the column by itself. But no, the logic for SQL Server is: the identity column has to be omitted from the insert statement.

Actually, this can be accomplished in DI quite easily. DI does not base the insert/update/delete statement on the table schema; it uses the columns of the input schema. So all we have to do is remove the identity column from the query before the table loader. And as this column is the physical primary key of the table, we have to check the flag 'use input keys' in the table loader.
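The idea of building the insert from the input schema, minus the identity column, can be sketched in Python. This is only an analogy: SQLite stands in for SQL Server here (SQLite would also accept an explicit key value, unlike an identity column), and the table name `customer`, its columns, and the helper `build_insert` are assumptions made for the example.

```python
import sqlite3


def build_insert(table, input_columns):
    """Build an INSERT statement from the columns of the input schema only."""
    column_list = ", ".join(input_columns)
    placeholders = ", ".join("?" for _ in input_columns)
    return f"INSERT INTO {table} ({column_list}) VALUES ({placeholders})"


conn = sqlite3.connect(":memory:")
# AUTOINCREMENT plays the role of the identity column in this sketch.
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY AUTOINCREMENT, name TEXT)")

table_schema = ["id", "name"]
identity_column = "id"

# Drop the identity column before the "loader", so it never appears in the insert.
input_schema = [col for col in table_schema if col != identity_column]
statement = build_insert("customer", input_schema)

conn.executemany(statement, [("Alice",), ("Bob",)])
loaded = conn.execute("SELECT id, name FROM customer ORDER BY id").fetchall()
print(statement)
print(loaded)
```

Since the key column is absent from the statement, the database assigns the key values itself.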

Just keep in mind that the above works for insert statements only. In update statements the primary key column is not updated anyway; it is used in the where clause only. Hence, for deletes and updates not only is there no problem, but the key column is required there. That makes a dataflow with mixed inserts and updates quite ugly. We need two separate streams of data: updates go into the table unchanged, while inserts are converted to normal rows so we can add a query downstream where the identity column is omitted.

A completely different approach is to do what the SQL Server error message advises: issue the command 'set IDENTITY_INSERT table ON'. This is a session command, so it needs to be part of the session loading the data. A sql() function call cannot be used, as this would be a session of its own. The only place where you can add it is the Preload Command in the table loader.

Neither of these solutions is perfect. So, long term, a feature has to be implemented where the end user can simply choose which method should be used: let DI generate the key or omit the identity column in inserts.